How I Think About AI Agents

Why the "People vs Process" Debate Was Always the Wrong Question — and Why Agentic Systems Finally Prove It

Watch the talk that prompted this article: Daniel Miessler — Anatomy of an Agentic Personal AI Infrastructure | [un]prompted 2026

More on what I'm building: ganderai.co.uk

I watched Daniel Miessler's talk at [un]prompted 2026 — Anatomy of an Agentic Personal AI Infrastructure — and it resonated more than I expected.

Miessler has built a system where specialised agents with distinct skills, persistent memory, and self-improving workflows operate around a single human's goals. Context drives everything. The system learns. It adapts. It gets better.

What Daniel is doing at personal scale, I've been building at enterprise scale. In many ways they're the same problem, and we've arrived at the same core principles from completely different starting points.

The Old Argument

A few years back, during an internal review of why major programmes were failing, a colleague and I disagreed over people versus process.

I believe in agile — done right. The problem is most organisations don't do it right. Agile is about iteration, taking small bites out of a large cake. But you still have to understand the size of the cake and what's been ordered, otherwise someone's going hungry.

The issue is scale. With multiple suppliers working on complex interdependent systems, you need context and a common understanding of desired outcomes. Without that, programme leadership skips requirements sign-off, bypasses high-level design, and then wonders why suppliers built the wrong thing.

For agile to work at scale, you need both great people and disciplined governance. My colleague's view: process was the drag, and the right people with the right attitude would find the way. I understood the frustration — but I'd seen the cost when governance is treated as optional.

We were both right. Bad process kills motivation. And without structured governance at scale, you get chaos dressed up as agility.

The process itself is the governance. Think TDD or BDD — you define the outcome before you write the code. Everyone shares a common understanding of what "done" looks like before anyone starts building. That's not overhead. That's how you avoid building the wrong thing.

AI agents are how you make that work without it feeling like a burden.

Most People Are Thinking About AI Wrong

Most organisations still think AI is ChatGPT. They see the value as productivity improvements — write emails faster, summarise documents, generate code snippets. Useful, but incremental.

That's not what's going to reshape how organisations work.

The real shift is individuals working with agents that understand context — about the person, the business, and the outside world. Agents that don't just respond to prompts but participate in how work gets done, how decisions get made, and how knowledge flows through an organisation.

That's a different proposition from a chatbot that drafts your meeting notes.

Daniel Miessler gets this. His [un]prompted talk wasn't about making ChatGPT more useful. It was about building an agentic infrastructure where AI operates as a persistent, context-aware partner — not a stateless tool you poke when you need something.

What Miessler Got Right — and Where It Gets Bigger

His Personal AI Infrastructure is built on a principle that sounds simple but is architecturally profound: orient the system around the human's goals, not the tooling.

His agents have defined skills and explicit boundaries. They carry context between sessions through layered memory and his TELOS framework — purpose, mission, goals, strategies — so the AI starts every interaction knowing what success looks like. They route tasks intelligently, capture what works, check their own output, and improve their own behaviour.

This is process — encoded, executed, and continuously refined — without the drudgery that makes humans resist it. The scaffolding does the governing. The human does the thinking.

I've been working on the same principle at organisational scale — independently, from the enterprise side of the problem.

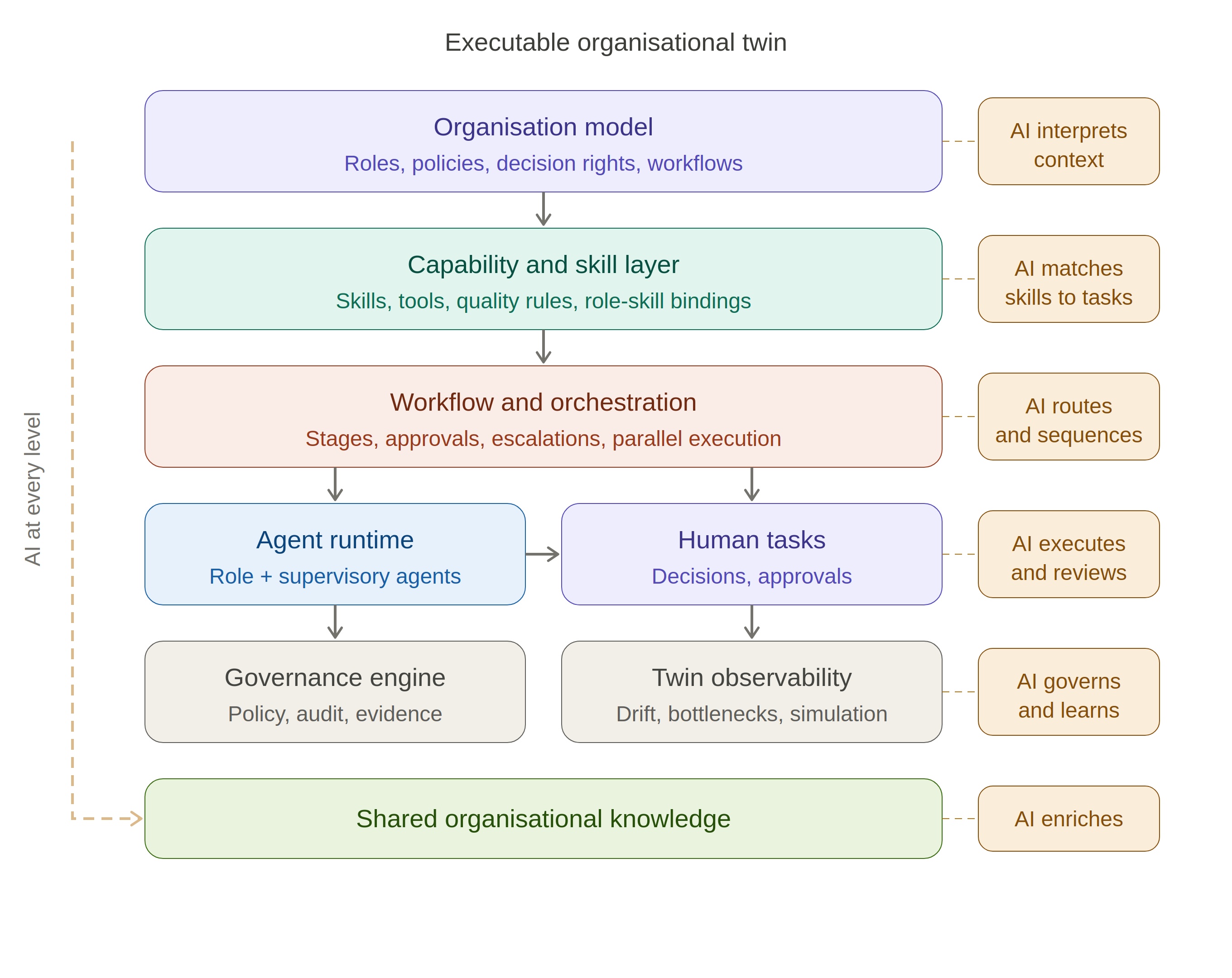

What if every role — architect, business analyst, delivery lead, security reviewer — had an agentic counterpart that understood the organisation's operating model? Not a chatbot. An agent that knows the governance rules, approval thresholds, quality standards, and decision rights. An agent that assembles the right context, routes work through the correct stages, flags gaps, and produces structured, auditable outputs at every step.

This is what I've been building: an executable digital twin of the organisation's operating model. Roles, workflows, policies, and decision rights as composable building blocks. Human and agent roles mixed. Governance as the fabric everything runs on, not a gate you pass through reluctantly.

From Personal Infrastructure to Organisational Hive

The architectural parallels between Miessler's PAI and the organisational twin I've been building are a case of convergent design. Two systems, built from opposite ends of the scale spectrum, arrive at the same insight: agents without context produce noise; agents with structured context produce outcomes.

Context as the foundation. Miessler's TELOS gives his agents purpose and direction at the start of every session. In an organisational twin, every agent needs the same grounding — what workflow is this part of, what policies apply, what's been decided, what artefacts are required, who has authority to approve. Without this, agents produce plausible outputs that are organisationally wrong. With it, they do the right thing the first time.
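To make that grounding concrete, here is a minimal sketch of a context bundle an agent might receive before starting work. The class name, field names, and the choice of which fields count as mandatory are my own assumptions for illustration, not part of TELOS or any particular framework.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """Hypothetical grounding an agent receives before it starts a task."""
    workflow: str = ""                                            # which workflow this task belongs to
    policies: list[str] = field(default_factory=list)             # policies that apply
    decisions: list[str] = field(default_factory=list)            # what has already been decided
    required_artefacts: list[str] = field(default_factory=list)   # outputs that must be produced
    approver: str = ""                                            # who has authority to approve

    # Assumption: prior decisions may legitimately be empty, the rest may not.
    REQUIRED = ("workflow", "policies", "required_artefacts", "approver")

    def missing(self) -> list[str]:
        """Return the names of required grounding fields that are still empty."""
        return [name for name in self.REQUIRED if not getattr(self, name)]


ctx = AgentContext(workflow="solution-design", policies=["security-review"])
gaps = ctx.missing()  # the agent should flag these before producing anything
```

The point of the sketch is the order of operations: the gaps are flagged before the agent produces an output, not discovered afterwards in review.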

Bounded autonomy, not free rein. Miessler's sub-agents have specific skills and operating parameters. They don't wander into tasks they weren't assigned. In an organisational twin, this becomes a tiered decision model — low-risk decisions executed within policy, medium-risk recommended by agents and approved by humans, high-risk owned by humans with agents providing evidence and analysis. Governance that scales without friction.
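The tiered decision model can be sketched as a simple lookup from risk level to who approves and what the agent is allowed to do. The names below (`Risk`, `Routing`, `route`) are hypothetical, and a real system would derive the risk level from policy rather than take it as an input.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class Routing:
    agent_action: str        # what the agent is permitted to do
    approver: Optional[str]  # who signs off; None means policy pre-approves


# Hypothetical mapping of the three tiers described above.
TIERS = {
    Risk.LOW: Routing(agent_action="execute", approver=None),        # executed within policy
    Risk.MEDIUM: Routing(agent_action="recommend", approver="human"),  # agent recommends, human approves
    Risk.HIGH: Routing(agent_action="advise", approver="human"),     # human owns it, agent supplies evidence
}


def route(risk: Risk) -> Routing:
    """Look up how a decision of the given risk level should be handled."""
    return TIERS[risk]
```

Keeping the tiers in one table means the boundary of agent autonomy is a reviewable artefact in its own right, rather than something scattered across prompts.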

Continuous learning from execution. Every interaction in Miessler's system feeds back into its evolving memory. At the organisational level, runtime telemetry captures how work actually flows versus how it was designed to flow. Drift detection identifies divergence from policy. Bottleneck analysis reveals where the model needs to change. The twin observes itself and recommends improvements.
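As a rough illustration of drift detection, the sketch below compares the steps a workflow was designed to follow with the steps observed in telemetry. The function name and step names are invented for the example, and real telemetry would need ordering and timing analysis as well as simple membership checks.

```python
def detect_drift(designed: list[str], observed: list[str]) -> dict[str, list[str]]:
    """Compare designed workflow steps with those actually observed at runtime."""
    skipped = [step for step in designed if step not in observed]    # designed but never happened
    unplanned = [step for step in observed if step not in designed]  # happened but never designed
    return {"skipped": skipped, "unplanned": unplanned}


# Hypothetical workflow: sign-off and design were bypassed, and a hotfix crept in.
designed = ["requirements_signoff", "high_level_design", "build", "test"]
observed = ["build", "hotfix", "test"]
drift = detect_drift(designed, observed)
```

Here `drift["skipped"]` lists the governance steps that were bypassed and `drift["unplanned"]` lists work that happened outside the designed model. Either list is a prompt to fix the behaviour or update the model.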

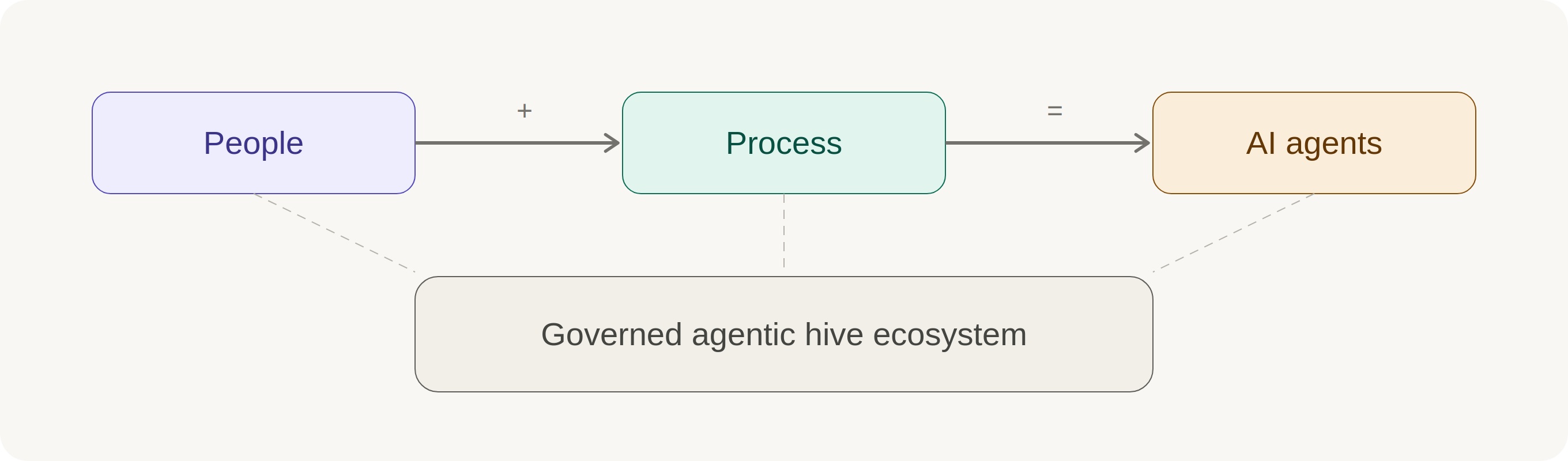

Everyone interfaces through agents and their skills. This is the shift most organisations haven't grasped. In an agentic enterprise, the operating model isn't a document on SharePoint. It's the running system that shapes how every piece of work gets done. Everyone — human and machine — operates within the same shared organisational truth. The organisation becomes a living, agentic hive ecosystem.

Governed Agents vs Ungoverned Agents

This is where my old argument becomes relevant again.

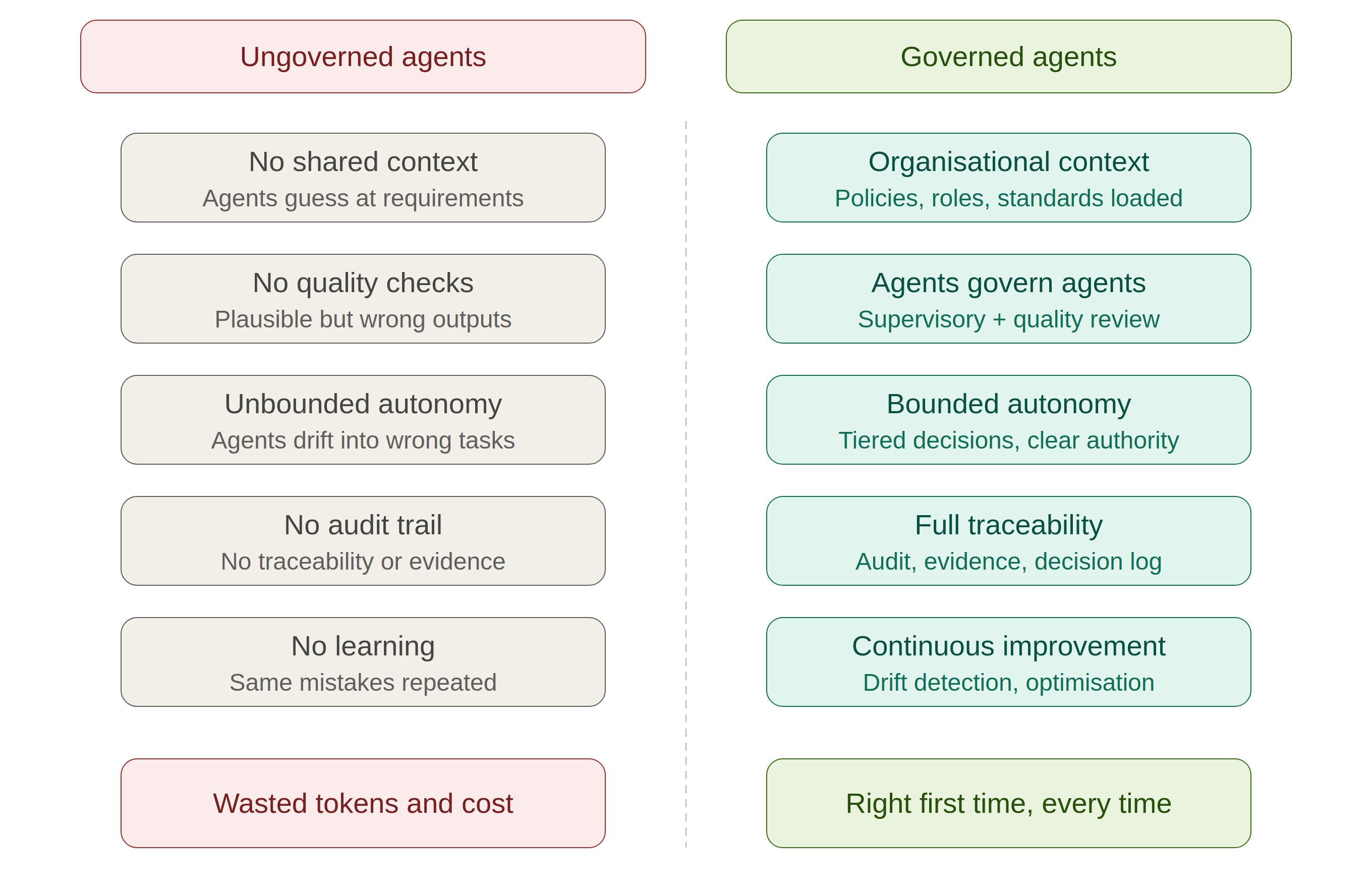

The excitement around AI agents is growing fast. And I see a familiar pattern: people want to skip the work of defining what agents should do, how they should be governed, and what "good" looks like. Deploy agents, hope for the best.

This will produce the same problems those programmes had, only faster and at greater cost. Ungoverned agents don't just waste time. They burn through tokens, produce unreliable work, and can cost the organisation thousands before anyone realises the outputs are wrong. They optimise locally while creating problems elsewhere.

The work has to be discrete and testable. Every agent task, every workflow step, every governance check needs to be independently verifiable. Fine-grained process definitions keep agents on track. Reputable, tested methodologies ensure the right outcome. If you can't test it, you can't trust it. If you can't trust it, you can't scale it.

Critically, governance doesn't mean human-in-the-loop for everything. Agents can govern agents. Supervisory agents review outputs against policy. Quality agents validate completeness. Orchestration agents manage workflow sequencing. Escalation agents route exceptions to the right human at the right time. In practice, agents are often better at managing and instructing each other than humans are at managing them. The key is understanding how to integrate the human where human judgment genuinely matters, and letting AI handle the rest.
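A minimal sketch of a supervisory step, assuming agent outputs arrive as plain dictionaries: each check is small and independently testable, and any failure escalates to a human. The check names, the required sections, and the 10,000 threshold are all hypothetical.

```python
from typing import Callable, Optional

# A check inspects an agent's output and returns a failure message, or None if it passes.
Check = Callable[[dict], Optional[str]]


def has_required_sections(output: dict) -> Optional[str]:
    """Quality check: the output must contain every required section."""
    missing = {"summary", "risks"} - output.keys()
    return f"missing sections: {sorted(missing)}" if missing else None


def within_threshold(output: dict) -> Optional[str]:
    """Policy check: cost must stay under a hypothetical approval threshold."""
    return "cost exceeds approval threshold" if output.get("cost", 0) > 10_000 else None


def supervise(output: dict, checks: list[Check]) -> dict:
    """Run every check; accept a clean output, escalate anything else to a human."""
    failures = [msg for check in checks if (msg := check(output)) is not None]
    status = "escalate_to_human" if failures else "accepted"
    return {"status": status, "failures": failures}


checks = [has_required_sections, within_threshold]
ok = supervise({"summary": "Design v2", "risks": [], "cost": 500}, checks)
bad = supervise({"summary": "Design v3", "cost": 20_000}, checks)
```

The human only sees `bad`, along with the reasons it failed. Everything that passes flows through without a manual gate.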

What This Means Now

Daniel Miessler has shown what personal agentic infrastructure looks like when built properly — goal-oriented, context-rich, skill-based, governed, and self-improving. The next frontier is the same architecture at enterprise scale.

The starting point is understanding your operating model. What are the inputs and outputs within your organisation? What are the roles, the decision rights, the policies, the quality standards? How can you rationalise these and mirror them with agents? That's the work that makes everything else possible.

The people-versus-process argument is over. You need both. You always needed both. AI agents make it possible to have both without compromise — governance without friction, agility without chaos, and an organisation where doing the right thing the first time is simply how work gets done.

The hive is coming. The question is whether your organisation will be a governed ecosystem that delivers — or an ungoverned one that generates expensive rework.

If you're working on agentic transformation, organisational digital twins, or governed AI at enterprise scale, I'd like to hear how you're approaching it. What's working? Where are the gaps?

#AIAgents #DigitalTransformation #EnterpriseAI #ProcessGovernance #AgenticAI